What Is an LLMs.txt File? A Look into AI-Ready Content, LLM SEO, and Generative Engine Optimization

As AI assistants and generative search engines reshape how users find information, website owners are facing a new era of digital visibility. Tools like ChatGPT, Gemini, Claude, Perplexity, and AI-powered search results rely less on traditional crawling and more on structured, semantically clear content they can interpret in seconds. This shift has created a new optimization frontier: LLM SEO (Large Language Model Search Optimization) and AI search optimization — also known as generative engine optimization (GEO).

At the center of this shift is a newly proposed file format: llms.txt.

Much like robots.txt became essential for communicating with early search crawlers, llms.txt is emerging as a bridge between website owners and AI agents, providing these models with a clean, direct “map” of a site’s most important, authoritative, and AI-ready content.

In this guide, you’ll learn everything you need to know about llms.txt files, including:

What an llms.txt file actually is

Why it matters in the era of generative search

How to set one up

Whether it’s worth adopting early

How it differs from robots.txt

How it supports authorship, accuracy, and attribution in AI responses

If you’re a website owner, SEO professional, documentation manager, or developer — this is your new playbook for the future of AI-driven visibility.


What Is an LLMs.txt File?

An llms.txt file is a machine-readable index written for AI assistants. It lists your most authoritative content, adds short summaries or explanations, and points LLMs to clean, structured pages that are easy to ingest. Some publishers also add usage or licensing notes, although the proposal defines no formal fields for them. Unlike robots.txt, which controls access, llms.txt acts as a curated knowledge map intended to improve how AI systems use your content when they answer user queries in real time.

In other words — robots.txt controls what search engines can crawl; llms.txt influences what AI should use.

Why the LLMs.txt File Exists

AI companies routinely crawl the web to train their models or answer questions in real time. But they face challenges:

HTML clutter (ads, scripts, layout code)

Unclear content hierarchy

Multiple versions of the same page

Misinterpretation of complex or technical information

Attribution issues

Legal and licensing uncertainty

The llms.txt concept was proposed in September 2024 by Jeremy Howard of Answer.AI as a way to give language models a concise, structured guide to a website, in the same spirit in which ads.txt, app-ads.txt, and robots.txt gave advertisers and search engines clarity in past eras.

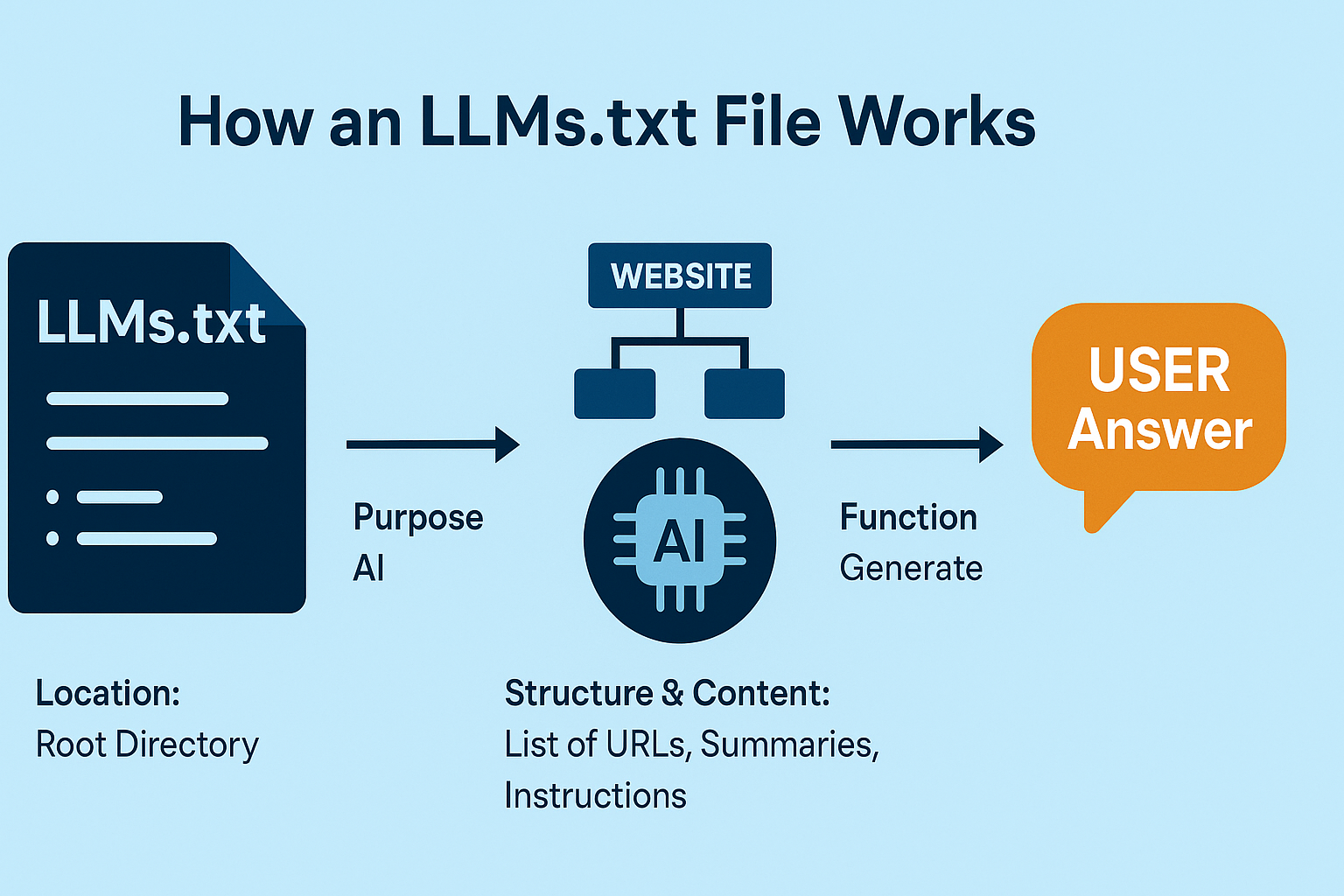

How an LLMs.txt File Works

1. Location

The file must be placed in the root directory: https://example.com/llms.txt

This ensures AI crawlers know exactly where to look.
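To make the location rule concrete, here is a minimal Python sketch that derives the root-level llms.txt address from any page URL on a site. The function name `llms_txt_url` is our own, chosen for illustration; it is not part of any official tooling.

```python
from urllib.parse import urlsplit, urlunsplit


def llms_txt_url(page_url: str) -> str:
    """Return the root-level llms.txt URL for the site hosting page_url."""
    parts = urlsplit(page_url)
    if not parts.scheme or not parts.netloc:
        raise ValueError(f"expected an absolute URL, got {page_url!r}")
    # Keep the scheme and host; drop the original path, query, and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/llms.txt", "", ""))


print(llms_txt_url("https://example.com/docs/setup?lang=en#install"))
# prints https://example.com/llms.txt
```

Whatever page a crawler starts from, the lookup always resolves to the same single address at the site root.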

2. Purpose

The llms.txt file acts like a VIP pass for AI assistants, pointing them to:

Authoritative documentation

High-value pages

Product or service overviews

Knowledge bases

Markdown exports

API docs

Policies

FAQs

Instead of letting AI models guess, you tell them where the most accurate content is.

3. Format & Structure

Most llms.txt files use simple Markdown-style formatting:

H2 sections for grouping topics

Bulleted lists of URLs

Short descriptions calling out content purpose

Optional summaries to guide interpretation

Optional usage permissions
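The formatting conventions above are simple enough to read with a few lines of code. The sketch below embeds a small sample file and groups its bulleted links under their H2 headings. The sample content, the `parse_llms_txt` name, and the `[title](url): note` link pattern it expects are illustrative assumptions, not a reference parser.

```python
import re

# Hypothetical file contents, used only for illustration.
SAMPLE = """\
# Example Co

> Example Co sells widgets and publishes setup guides.

## Docs

- [Getting started](https://example.com/docs/start.md): Install and first run
- [API reference](https://example.com/docs/api.md)

## Policies

- [Licensing](https://example.com/legal/license.md): Terms for reuse
"""

# A bullet holding a Markdown link, optionally followed by ": note".
LINK = re.compile(
    r"^[-*]\s+\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<note>.*))?$"
)


def parse_llms_txt(text):
    """Group (title, url, note) tuples under the H2 heading they appear in."""
    sections = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK.match(line)
            if match:
                sections[current].append(
                    (match["title"], match["url"], match["note"] or "")
                )
    return sections


for heading, links in parse_llms_txt(SAMPLE).items():
    print(heading, [url for _, url, _ in links])
```

Lines that are neither H2 headings nor link bullets (the title, the summary, blank lines) are simply skipped, which keeps the sketch tolerant of free-text notes.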

4. Content

Typical llms.txt contents include:

A brief site description

Groups of URLs (e.g., /docs/, /blog/, /products/)

Canonical versions of content

Instructions for LLM interpretation (e.g., “Use this version for product specs”)

Licensing information

Attribution notes

Opt-in / opt-out rules for training or inference

API endpoints

Sitemaps

5. Real-Time Function

When an AI model needs to answer a question, instead of crawling your website blindly, it can:

Check your llms.txt

Jump directly to the most relevant URL

Interpret the content

Summarize it with higher accuracy

Attribute it correctly

This makes llms.txt an essential building block in AI search optimization and generative engine optimization.
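As a rough illustration of the "jump directly to the most relevant URL" step, the sketch below scores each llms.txt entry by how many words it shares with the user's question. Real assistants use far more sophisticated retrieval, and none has documented this exact approach; the `ENTRIES` data and the `best_entry` function are hypothetical.

```python
import re

# Hypothetical (title, url, note) entries, as if already parsed from an llms.txt file.
ENTRIES = [
    ("Pricing", "https://example.com/pricing.md", "Plans, billing, and refunds"),
    ("API reference", "https://example.com/docs/api.md", "Endpoints and authentication"),
    ("FAQ", "https://example.com/faq.md", "Common setup questions"),
]


def tokens(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_entry(question, entries):
    """Return the entry sharing the most words with the question, or None if nothing overlaps."""
    if not entries:
        return None
    q = tokens(question)
    scored = [
        (len(q & tokens(f"{title} {note}")), (title, url, note))
        for title, url, note in entries
    ]
    score, entry = max(scored, key=lambda pair: pair[0])
    return entry if score > 0 else None


match = best_entry("How does API authentication work?", ENTRIES)
print(match[1])
# prints https://example.com/docs/api.md
```

The takeaway for site owners is that the titles and short descriptions in the file do real work: they are the only text available for matching before a page is fetched.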

Source: llms.txt proposal, llmstxt.org (2024)

What Is an LLMs.txt File Used For?

Here are the core uses:

✔ AI-Ready Content Indexing

It gives LLMs a curated guide to the most accurate, canonical content — especially helpful for sites with:

Redundant pages

Dynamic content

Docs spread across subdirectories

Both HTML and Markdown versions

✔ Attribution and Source Transparency

LLMs often omit source citations.

Listing canonical URLs in llms.txt gives them a stable, unambiguous page to attribute.

✔ Legal and Licensing Clarity

You can specify:

What’s allowed for training

What’s allowed for inference

Commercial vs. non-commercial usage

Attribution expectations

✔ Reducing AI Misinformation

Large language models often hallucinate when content is ambiguous or outdated.

llms.txt helps anchor them to accurate versions.

✔ Clean Data for AI Crawlers

You can guide crawlers toward:

Markdown files

API endpoints

Raw data feeds

Policy pages

Structured content

This eliminates confusion caused by site layouts, ads, navigation, and design elements.

✔ Support for Generative Engine Optimization (GEO)

This is the future of SEO.

llms.txt is one of the first structured tools specifically designed to influence visibility in:

ChatGPT search

Gemini search

Perplexity answers

Bing AI

Claude retrieval

AI Overviews

Benefits of an LLMs.txt File

1. Improved AI Visibility (LLM SEO)

If you want your site referenced in AI answers, llms.txt can be a competitive edge, provided the assistants you care about choose to read it.

2. Faster and More Accurate Retrieval

LLMs spend less time guessing how to navigate your site.

3. Higher Content Authority

You can highlight:

Whitepapers

Long-form guides

Legal pages

Technical documentation

Research assets

AI engines prefer authoritative documents, and you can explicitly elevate them.

4. Reduced Misinterpretation

LLMs misread complex HTML.

They perform far better with clean, structured content.

5. Better Attribution and Compliance

You set the rules for how AI tools can use your content.

6. Cleaner AI Training Signals

Training on better-structured content can mean fewer hallucinations.

7. Future-Proofing Your Website for AI Search

AI search is not the future.

It’s already happening.

llms.txt is one of the first tools purpose-built for this new era.

Are LLMs.txt Files Worth It?

Short answer: Yes — especially if you rely on organic traffic, run a knowledge-based site, or want visibility in AI search responses.

Here’s what to consider:

If you produce high-value content… Yes, 100%.

Bloggers, SaaS platforms, brands, and educational publishers benefit most.

If you run a documentation-heavy website… It's a must.

Docs, product pages, and knowledge bases are prime AI ingestion targets.

If you're in a competitive industry… It's a differentiator.

The first businesses to optimize for AI visibility will capture more leads.

If you have licensing, attribution, or copyright concerns… Absolutely.

llms.txt lets you define your own rules.

If you're an SEO professional… This is your next major lever.

As AI search grows, llms.txt will become a standard optimization tool.

How to Properly Structure an LLMs.txt File

Below is a recommended structure based on the published llms.txt proposal and early publisher conventions.

1. Start with a Site Overview

2. Provide High-Level Documentation Sections

3. Highlight Canonical Content

4. Add Summaries or Usage Notes

5. Add Licensing and Permissions (Optional)

6. Provide a Sitemap or API Access Point

7. Add Crawl Delays and Contact Info
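One way to assemble a file along these lines is to generate it from a small data structure. The Python sketch below is an illustration under stated assumptions: the site name, URLs, and contact address are invented, and `build_llms_txt` is our own helper. The published proposal defines only a title, a summary, free-text notes, and H2 link lists, so licensing, contact, and crawl-delay details are written here as plain notes that no crawler is known to enforce.

```python
def build_llms_txt(name, summary, sections, notes=()):
    """Render a Markdown llms.txt: H1 title, blockquote summary, free-text notes, H2 link lists."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for note in notes:
        lines += [note, ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, desc in links:
            suffix = f": {desc}" if desc else ""
            lines.append(f"- [{title}]({url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# All values below are hypothetical placeholders.
text = build_llms_txt(
    name="Example Co",                                               # step 1: site overview
    summary="Example Co sells widgets and publishes setup guides.",
    notes=[
        "Licensing: see the Policies section. Contact: webmaster@example.com",  # steps 5 and 7
    ],
    sections={
        "Docs": [                                                    # steps 2 to 4
            ("Getting started", "https://example.com/docs/start.md", "Canonical install guide"),
        ],
        "Policies": [
            ("Content license", "https://example.com/legal/license.md", ""),
        ],
        "Optional": [                                                # step 6
            ("Sitemap", "https://example.com/sitemap.xml", "Full URL list"),
        ],
    },
)
print(text)
```

Generating the file from data, rather than editing it by hand, makes it easier to keep the listed URLs in sync with the pages you actually publish.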

LLMs.txt vs Robots.txt: Key Differences

| Feature | robots.txt | llms.txt |
| --- | --- | --- |
| Audience | Traditional web crawlers | AI assistants & LLM agents |
| Purpose | Crawl control & indexing | Content understanding & retrieval |
| Function | Allow/Disallow crawling | Highlight important content |
| Usage | Search engine SEO | AI search optimization & LLM SEO |
| Content | Rules | Links, summaries, licensing |
| Impact | Influences rankings | Influences AI visibility & answers |
| Real-time Use | No | Yes |
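To see the access-control side of this comparison in practice, the sketch below uses Python's standard `urllib.robotparser` module to check URLs against a robots.txt rule set. The rules, the `ExampleBot` user agent, and the `can_crawl` helper are hypothetical. Note that llms.txt has no equivalent allow/deny semantics: it only recommends content.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules, used only for illustration.
ROBOTS = """\
User-agent: *
Disallow: /private/
"""


def can_crawl(robots_text, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser.can_fetch(user_agent, url)


print(can_crawl(ROBOTS, "ExampleBot", "https://example.com/private/report.html"))
# prints False
print(can_crawl(ROBOTS, "ExampleBot", "https://example.com/docs/start.md"))
# prints True
```

A page blocked in robots.txt should not also be listed in llms.txt, since the two files would then send conflicting signals.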


Conclusion

As AI assistants become a primary way users find information, an llms.txt file gives website owners a powerful new way to guide how their content is understood and cited. By providing LLMs with clean, structured links to your most authoritative pages, you improve accuracy, attribution, and visibility across generative search results.

Though still an emerging standard, adopting llms.txt now positions your site ahead of the curve in AI search optimization and LLM SEO. It’s a simple, forward-thinking step that prepares your website for the future of discovery in an AI-driven world.

FAQs

Is llms.txt an official standard?

Not yet. It’s a proposed standard, published by Jeremy Howard of Answer.AI in 2024, with early industry adoption.
